Problem statement

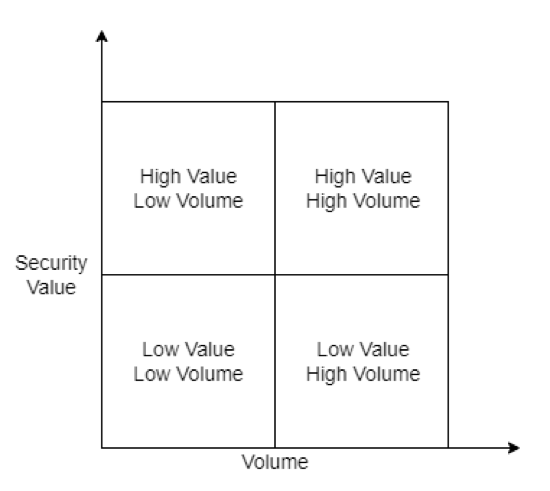

Data prioritization is an issue that any SIEM or data collection and analysis solution must consider. The logs we send to a SIEM are typically security-related and can directly generate alerts from the events they contain, as EDR alerts do. However, not all logs carry equal weight.

For instance, proxy logs only record the connections made by internal users. They are very useful for investigation, but they do not directly create alerts and they are high-volume. To reflect this, we categorize logs as primary or secondary based on their security value and volume.

Primary log sources, the ones used for detection, frequently contain the metadata and context of what was discovered. However, secondary log sources are sometimes required to present a complete picture of a security incident or breach. Unfortunately, many of these secondary sources are high-volume, verbose logs with little relevance for detection; they are only useful when a security incident or threat hunt requires them. In a traditional on-premises deployment, we would run the SIEM alongside a data lake that stores secondary logs for later use.

Because we control the whole environment on-premises, we can use any technology or solution, which makes this simple to set up (e.g., QRadar for the SIEM and ELK for the data lake). However, this becomes more difficult with a cloud-native SIEM, particularly Microsoft Sentinel. Microsoft Sentinel is a cloud-native security information and event management (SIEM) platform that includes artificial intelligence (AI) to help with data analysis across an enterprise. We typically use Log Analytics with the Analytics Logs data plan to store and analyze everything for Sentinel. However, this is prohibitively expensive for high-volume data, costing between $2.00 and $2.50 per GB ingested, depending on the Azure region used.

Current Solution

Storage Account (Blob Storage)

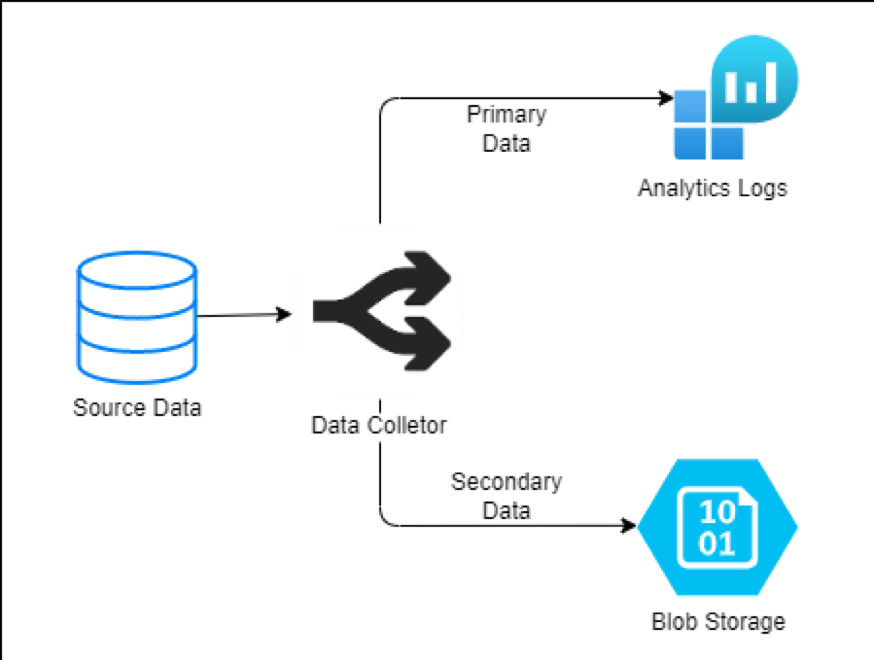

To store this secondary data, the current approach uses Blob Storage. Blob Storage is designed to hold large volumes of unstructured data, that is, data such as text or binary content that does not follow a particular data model or definition. This makes it a low-cost option for storing large amounts of data. The architecture for this solution is shown in the diagram above.

However, Blob Storage has a limitation that is hard to ignore: the data in it is not searchable. We can work around this by using Azure Cognitive Search, as demonstrated in Search over Azure Blob Storage content, but this adds another layer of complexity and cost that we would prefer to avoid. The alternative is the KQL externaldata operator, but it is designed to retrieve small amounts of data (up to 100 MB) from an external storage artifact, not massive amounts of data.

Our Solution

High-Level Architecture

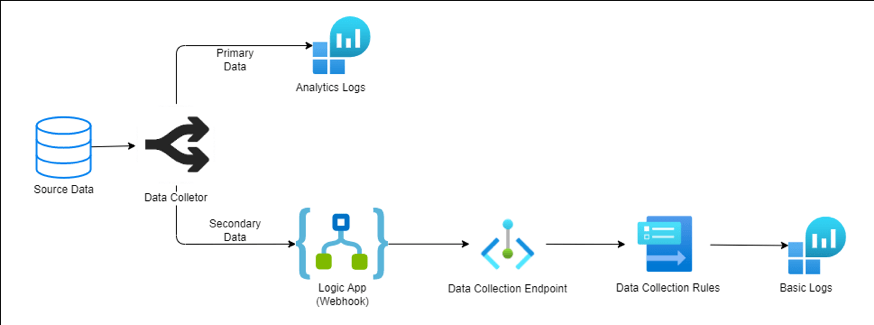

Our solution uses Basic Logs to tackle this problem. Basic Logs is a less expensive option for ingesting large amounts of verbose log data into your Log Analytics workspace. Basic Logs also supports a subset of KQL, which makes the data searchable. To get logs into a Basic Logs table, we need a custom table created through the Data Collection Rule (DCR)-based logs ingestion API. The high-level architecture is shown in the diagram above.

Our Experiment

In our experiment, we use the following components for the architecture:

| Component | Solution | Description |
| --- | --- | --- |
| Source Data | VMware Carbon Black EDR | Carbon Black EDR is an endpoint activity data capture and retention solution that allows security professionals to chase attacks in real-time and observe the whole attack kill chain. This means that it captures not only data for alerting, but also data that is informative, such as binary or host information. |
| Data Processor | Cribl Stream | Cribl helps process machine data in real-time - logs, instrumentation data, application data, metrics, and so on - and delivers it to a preferred analysis platform. It supports sending logs to Log Analytics, but only with the Analytics plan. |

To send logs to the Basic plan, we need to set up a data collection endpoint and a data collection rule; see Logs ingestion API in Azure Monitor (Preview) for details on how to set this up. We also use a Logic App as a webhook that receives the logs and forwards them to the data collection endpoint.
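As a rough illustration of what the forwarding step has to produce, the sketch below builds (but does not send) the HTTP request that the Logs ingestion API expects. It is not the Logic App used in this post. The endpoint URI, DCR immutable ID, stream name, record fields, and token are hypothetical placeholders, and the `2023-01-01` API version is an assumption (the setup described here used the preview API).

```python
import json
import urllib.request

# Assumption: a generally available API version; the post's setup used the preview.
API_VERSION = "2023-01-01"


def build_ingestion_request(dce_uri, dcr_immutable_id, stream_name, records, bearer_token):
    """Build (but do not send) a POST request for the Logs ingestion API."""
    url = (
        f"{dce_uri.rstrip('/')}/dataCollectionRules/{dcr_immutable_id}"
        f"/streams/{stream_name}?api-version={API_VERSION}"
    )
    return urllib.request.Request(
        url,
        data=json.dumps(records).encode("utf-8"),  # body is a JSON array of records
        method="POST",
        headers={
            "Authorization": f"Bearer {bearer_token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical values for illustration only; none of them come from the post
# except the table name Cb_logs_Raw_CL.
request = build_ingestion_request(
    dce_uri="https://example-dce.eastus-1.ingest.monitor.azure.com",
    dcr_immutable_id="dcr-00000000000000000000000000000000",
    stream_name="Custom-Cb_logs_Raw_CL",
    records=[{"TimeGenerated": "2022-01-01T00:00:00Z", "RawData": "example event"}],
    bearer_token="<token>",
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it; in practice the bearer token has to come from an Azure AD identity that has been granted permission on the data collection rule.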

The environment we use for log generation is as follows:

- Number of hosts: 2

- Operating system: Windows Server 2019

- Number of demo days: 7

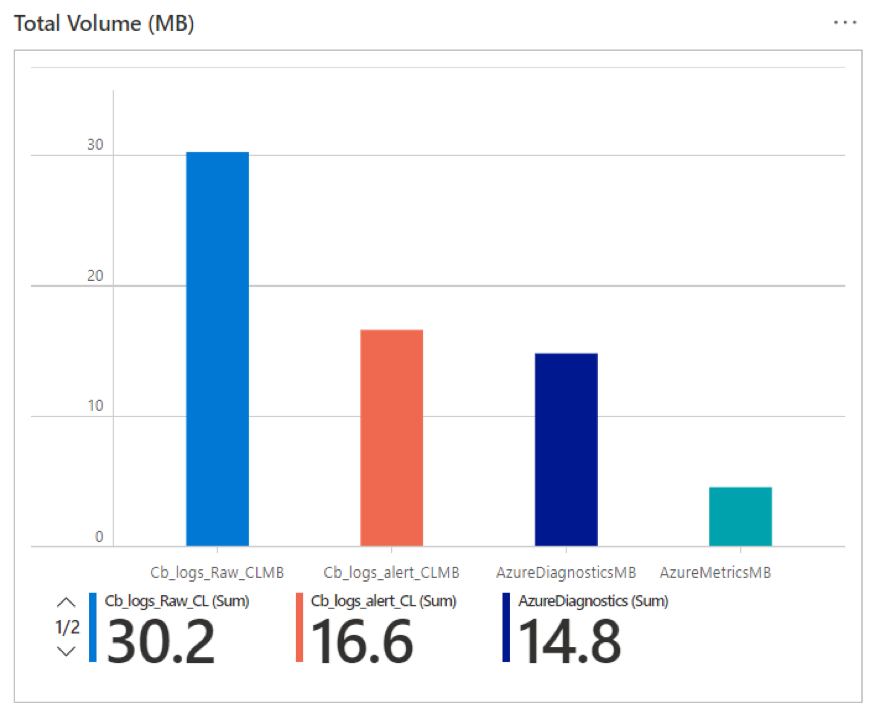

The volume of logs we collected in our test environment was:

- Basic Log generated: 30.2 MB

- Alerts generated: 16.6 MB

Costs are calculated in USD for the East US region on the Pay-As-You-Go tier. To estimate the savings, we extrapolated the data generated in the test environment to 1,000 hosts and a 30-day retention period.
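The extrapolation can be sketched in a few lines of Python. This is a reconstruction of the arithmetic, assuming decimal gigabytes (1 GB = 1,000 MB) and a simple linear scaling from 2 hosts over 7 days to 1,000 hosts; the per-GB prices are the ones used in the cost tables in this post, and the function names are illustrative.

```python
# Measurements from the test environment described in this post.
SAMPLE_HOSTS = 2
SAMPLE_DAYS = 7
RAW_MB = 30.2    # verbose (secondary) logs
ALERT_MB = 16.6  # alert (primary) logs

MB_PER_GB = 1000  # assumption: decimal gigabytes

# USD per GB ingested or stored, as used in the cost tables.
ANALYTICS, BASIC, BLOB = 2.30, 0.50, 0.02


def daily_gb(sample_mb, target_hosts=1000):
    """Scale the sampled volume linearly to GB per day for target_hosts."""
    per_host_per_day_mb = sample_mb / (SAMPLE_HOSTS * SAMPLE_DAYS)
    return per_host_per_day_mb * target_hosts / MB_PER_GB


def period_cost(sample_mb, usd_per_gb, target_hosts=1000, days=30):
    """Cost of `days` worth of data at a flat per-GB price."""
    return daily_gb(sample_mb, target_hosts) * usd_per_gb * days


alerts = period_cost(ALERT_MB, ANALYTICS)  # alerts always go to Analytics Logs
scenarios = {
    "Only Analytic Log": period_cost(RAW_MB, ANALYTICS) + alerts,
    "Analytic Log with Storage Account": period_cost(RAW_MB, BLOB) + alerts,
    "Analytic Log with Basic Log": period_cost(RAW_MB, BASIC) + alerts,
}

for name, cost in scenarios.items():
    print(f"{name}: ${cost:.2f}")
```

Keeping the sample sizes and prices as named constants makes it easy to rerun the estimate with a different host count or region price.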

The calculation using only Analytic Log

| Table | Ingestion volume (GB/day) | Cost per GB (USD) | Total cost per day (USD) | Total cost per retention period (USD) | Number of hosts | Retention (days) |
| --- | --- | --- | --- | --- | --- | --- |
| Cb_logs_Raw_CL | 2.16 | 2.3 | 4.96 | 148.84 | 1000 | 30 |
| Cb_logs_alert_CL | 1.19 | 2.3 | 2.73 | 81.81 | 1000 | 30 |
| | | Total | 7.69 | 230.66 | | |

If we use Analytic Log with Storage Account

| Table | Ingestion volume (GB/day) | Cost per GB (USD) | Total cost per day (USD) | Total cost per retention period (USD) | Number of hosts | Retention (days) |
| --- | --- | --- | --- | --- | --- | --- |
| Cb_logs_Raw_CL | 2.16 | 0.02 | 0.04 | 1.29 | 1000 | 30 |
| Cb_logs_alert_CL | 1.19 | 2.3 | 2.73 | 81.81 | 1000 | 30 |
| | | Total | 2.77 | 83.11 | | |

If we use Analytic Log with Basic Log

| Table | Ingestion volume (GB/day) | Cost per GB (USD) | Total cost per day (USD) | Total cost per retention period (USD) | Number of hosts | Retention (days) |
| --- | --- | --- | --- | --- | --- | --- |
| Cb_logs_Raw_CL | 2.16 | 0.5 | 1.08 | 32.36 | 1000 | 30 |
| Cb_logs_alert_CL | 1.19 | 2.3 | 2.73 | 81.81 | 1000 | 30 |
| | | Total | 3.81 | 114.17 | | |

Now let’s compare the three options side by side.

| | Only Analytic Log | Analytic Log with Storage Account | Analytic Log with Basic Log |
| --- | --- | --- | --- |
| Cost calculated | $230.66 | $83.11 | $114.17 |
| Searchable | Yes | No | Yes, but costs $0.005 per GB scanned |
| Retention | Up to 2,556 days (7 years) | 146,000 days (400 years) | Up to 2,556 days (7 years) |

Limitations

Even though Basic Logs is an excellent choice for ingesting hot data, it has some limitations that are difficult to overlook:

- The retention period is only eight days and cannot be increased. After that, the data is either deleted or archived

- KQL support is limited to a subset of operators; see the Basic Logs query documentation for the list of supported operators

- There is a charge for interactive queries ($0.005/GB-scanned)
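To put the query charge in perspective, the sketch below estimates what a scan costs and how much data would have to be scanned before the query charges use up the saving over the Analytics-only option. It is a back-of-the-envelope calculation, not a pricing tool: it reuses the totals and daily volume from the tables in this post and assumes the flat $0.005 per GB scanned rate is the only query cost.

```python
QUERY_USD_PER_GB_SCANNED = 0.005

# Figures taken from the cost tables in this post (1,000 hosts, 30 days).
ANALYTICS_ONLY_TOTAL = 230.66
BASIC_TOTAL = 114.17
RAW_GB_PER_DAY = 2.16
BASIC_RETENTION_DAYS = 8  # interactive retention of a Basic Logs table


def query_cost(gb_scanned):
    """Charge for one interactive query that scans gb_scanned of data."""
    return gb_scanned * QUERY_USD_PER_GB_SCANNED


def break_even_gb(cheaper_total, pricier_total):
    """GB that can be scanned before query charges cancel the saving."""
    return (pricier_total - cheaper_total) / QUERY_USD_PER_GB_SCANNED


# Worst case for a single query: scanning every retained day of raw logs.
full_scan_gb = RAW_GB_PER_DAY * BASIC_RETENTION_DAYS
full_scan_cost = query_cost(full_scan_gb)
headroom_gb = break_even_gb(BASIC_TOTAL, ANALYTICS_ONLY_TOTAL)

print(f"Full scan: {full_scan_gb:.2f} GB, ${full_scan_cost:.4f}")
print(f"Scan headroom vs. Analytics only: {headroom_gb:,.0f} GB")
```

Under these assumptions, a single full scan of the retained data costs well under a dollar, so the charge mainly matters for workloads that query the verbose logs very frequently.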

This is the first post in this Sentinel Cost Optimization series. Hopefully, it gives you another option to consider when setting up and sending your custom logs to Sentinel.